ML vs DL vs GenAI: Building a Strong AI Career Foundation

Generative AI can feel like a shortcut: type a prompt, get a polished answer, ship a demo. But real projects don’t reward “cool outputs”. They reward reliable systems.

Sooner or later, the same questions show up:

- Why is the model confidently wrong?

- How do we measure quality?

- What happens when data changes?

- How do we control risk (privacy, compliance, safety)?

If you can answer those questions, you’re not just using AI—you’re building it.

This article breaks down the differences between Machine Learning, Deep Learning, and Generative AI, and explains why ML fundamentals remain the critical differentiator for senior engineers.

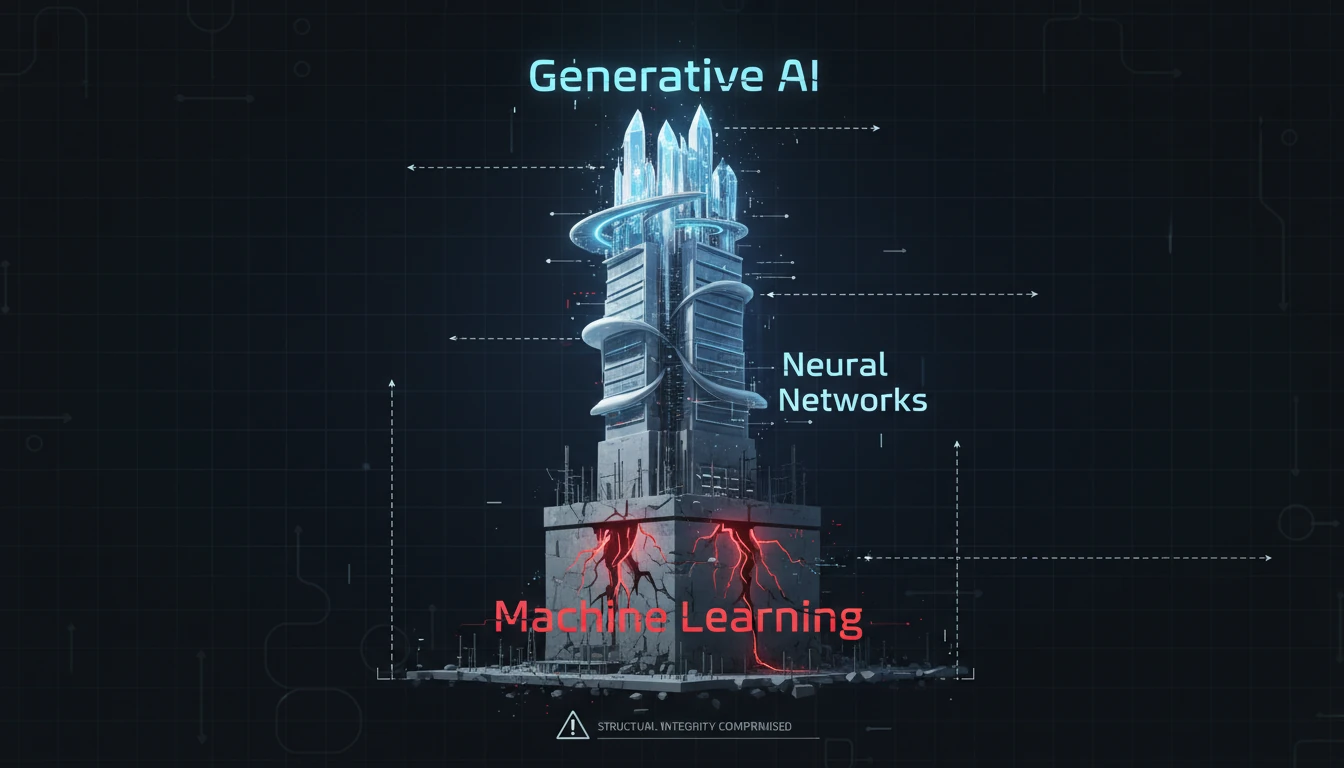

Why is Your Generative AI Dream Built on a Shaky Foundation?

It’s tempting to dive straight into prompt engineering and think: “If I can get great outputs, I’m ready.”

But that’s like an aspiring architect focusing only on interior design while ignoring structural engineering. The penthouse may look amazing… until the building starts cracking.

Here is a real-world example of why foundations matter:

Field Note: The "Perfect" Demo That Failed in Production

I once audited a legal-tech startup that built a GenAI contract analyzer. Their demo was flawless: you uploaded a PDF, and it summarized the liability clauses perfectly. Investors loved it.

But when they onboarded their first enterprise client, the system collapsed. The client's contracts were scanned images, not digital text. The GenAI model hallucinated clauses because the OCR (a classic Machine Learning task) was noisy. The team had spent 100% of their time prompting the LLM and 0% handling the data ingestion pipeline.

The Fix: We didn't change the prompt. We built a robust computer vision pipeline (Deep Learning) to clean the documents before they ever reached the LLM. The error rate dropped by 80%.

Generative AI is the roof. Machine Learning is the foundation.

If you skip the foundation, you can build a great demo, but you cannot build a reliable product.

What Are the Core Differences: Machine Learning, Deep Learning, and Generative AI?

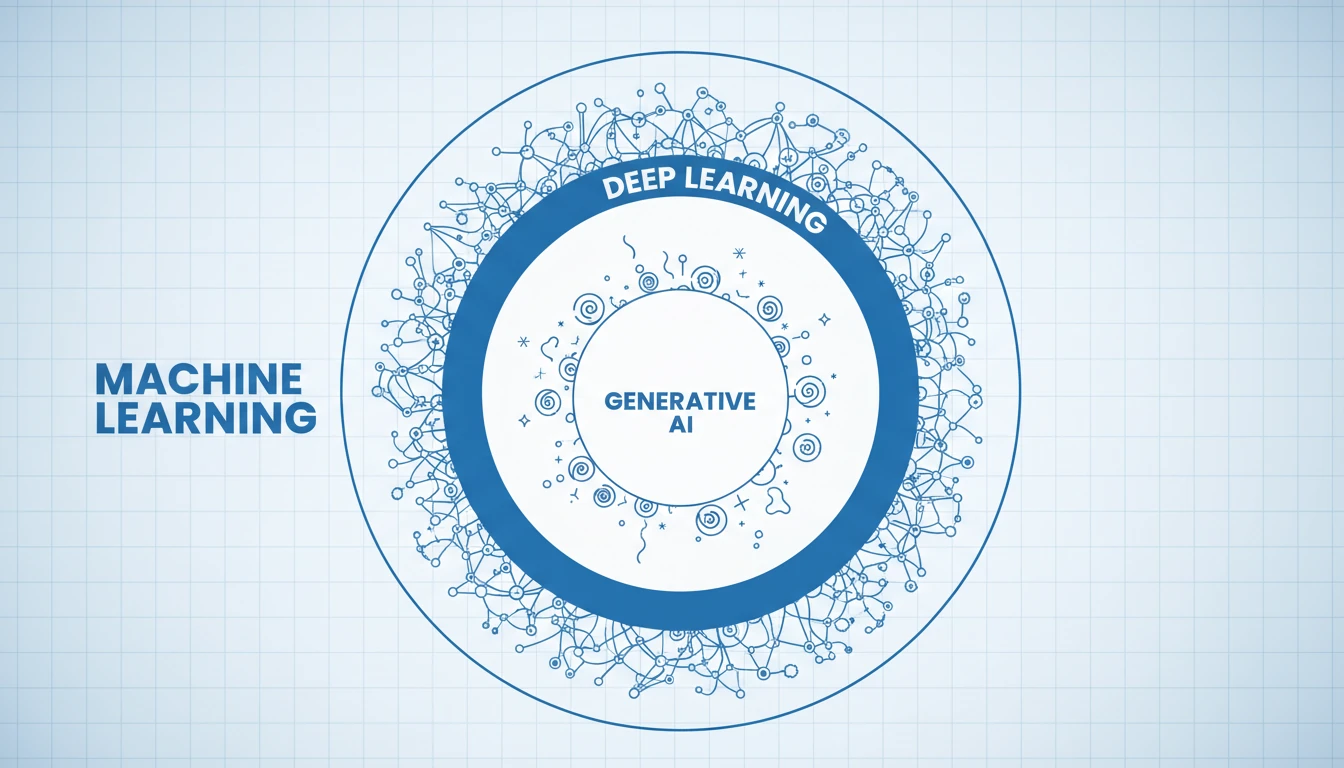

To understand where Generative AI fits, think of nested layers (or Russian nesting dolls):

- Machine Learning (ML) is the broad umbrella.

- Deep Learning (DL) is a subset of ML.

- Generative AI (GenAI) is a subset of DL.

Here’s the simplest breakdown.

Quick Comparison Table

| Topic | Machine Learning (ML) | Deep Learning (DL) | Generative AI (GenAI) |
|---|---|---|---|
| Main goal | Predict / decide | Learn complex patterns | Generate new content |
| Typical data | Mostly structured | Mostly unstructured | Massive corpora (often multimodal) |
| Common tasks | churn, fraud, forecasting | vision, speech, NLP | chatbots, summarization, image generation |
| Example models | logistic regression, XGBoost | CNNs, Transformers | LLMs, diffusion models |
| Main risks | leakage, bias, drift | cost, stability | hallucinations, safety, eval |

The Architectural Blueprint

Machine Learning (ML): The Foundation

ML is any system that learns from data to make predictions or decisions without being explicitly programmed. Think spam filters, fraud detection, churn prediction, pricing, demand forecasting. ML is often efficient and strong on structured data—but it forces you to learn the essentials: data quality, evaluation, and reliability.

For a deeper dive: Machine Learning vs Deep Learning in detail

Deep Learning (DL): The Structural Core

DL uses multi-layer neural networks to learn patterns from large, complex, often unstructured datasets. It’s great for images, audio, and text. This is where GPUs, training stability, and experimentation discipline become part of the job.

Generative AI (GenAI): The Penthouse Suite

GenAI is deep learning focused on creating new content: text, images, code, audio. Models learn patterns so well they can generate novel outputs. But with that power comes risk: outputs are open-ended, evaluation is harder, and failures can be subtle.

If you want a broader view of generative methods: explore GANs

Key Takeaway: GenAI ⊂ Deep Learning ⊂ Machine Learning. You can’t build the penthouse without the foundation.

How Do Machine Learning Fundamentals Impact Generative AI Success?

A strong GenAI system is not “prompt + model”.

It’s a pipeline: data → context → generation → evaluation → monitoring.

That pipeline is pure Machine Learning thinking.

Below are the two ML foundations that most GenAI projects underestimate.

The "Garbage In, Garbage Out" Principle

ML teaches a harsh rule: if your inputs are messy, outputs will be messy—no matter how powerful the model is.

In GenAI, “good inputs” usually means:

- choosing the right sources (what is allowed? what is trusted?)

- cleaning documents (duplicates, outdated info, inconsistent terms)

- chunking and indexing content for retrieval (RAG)

- designing structured inputs when needed (schemas, tables, normalized fields)

Classic feature engineering is still relevant (especially for structured problems).

But in modern GenAI apps, the biggest “feature engineering” often looks like grounding and context design.

If you feed raw, confusing data to a model, you get confident nonsense back.

If you feed clean, scoped, well-grounded context, quality improves dramatically.
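To make the cleaning and chunking steps concrete, here is a minimal sketch using only the Python standard library. The function names `dedupe` and `chunk_words`, the word-based window, and the default sizes are illustrative assumptions, not a prescribed method; real pipelines often chunk by tokens or by document structure instead.

```python
import hashlib


def dedupe(docs):
    """Drop exact duplicates after normalizing case and whitespace."""
    seen, unique = set(), []
    for doc in docs:
        normalized = " ".join(doc.lower().split())
        key = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if key not in seen:
            seen.add(key)
            unique.append(doc)
    return unique


def chunk_words(text, size=50, overlap=10):
    """Split text into windows of `size` words that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


docs = [
    "Refund policy: 30 days.",
    "refund  policy: 30 days.",  # same content, different case and spacing
    "Shipping takes 5 days.",
]
clean_docs = dedupe(docs)
chunks = chunk_words("a b c d e f g h i j", size=4, overlap=1)
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks, which tends to help retrieval at the cost of some duplicated text in the index.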

Measuring What Matters

GenAI outputs often sound right. That’s the danger.

In ML, you learn to ask: “How do we measure success?”

That mindset is non-negotiable in GenAI too.

For classic ML tasks, metrics like precision, recall, and F1 help you understand trade-offs and failure modes.
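As a quick illustration of how these three metrics relate, the sketch below computes them from raw labels without any library. The function name `precision_recall_f1` and the toy labels are made up for this example.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Toy fraud labels: 1 = fraud, 0 = legitimate.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 1, 0, 0, 0, 0]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
```

Here the model flags three cases and two are truly fraud (precision 2/3), but it catches only two of the four fraud cases (recall 1/2), giving an F1 of 4/7. Which of the two errors costs more is a business decision, and that is the trade-off these metrics expose.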

For GenAI, you usually need a broader evaluation toolkit:

- a small test set of real questions (even 20–50 is a start)

- expected answers, or at least clear acceptance criteria

- human review with a simple rubric (correctness, completeness, tone)

- automated checks (PII leakage, toxicity, policy violations)

- regression tests (did the latest prompt/RAG change make things worse?)

Without evaluation, you’re not improving—you’re guessing.
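A regression suite for a GenAI feature can start very small. The sketch below is one possible shape, under stated assumptions: `fake_model` is a hypothetical stand-in for a real LLM call, the `must_include` acceptance criteria are invented, and the email regex is a deliberately crude PII check rather than a complete one.

```python
import re

# Crude email detector used as a stand-in for a real PII check.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

TEST_SET = [
    {"question": "What is the refund window?", "must_include": ["30 days"]},
    {"question": "Who do I contact for support?", "must_include": ["support portal"]},
]


def fake_model(question):
    """Hypothetical model: returns canned answers instead of calling an LLM."""
    canned = {
        "What is the refund window?": "Refunds are accepted within 30 days.",
        "Who do I contact for support?": "Email jane.doe@example.com for help.",
    }
    return canned.get(question, "")


def evaluate(model, test_set):
    """Run every test case and record missing phrases and leaked emails."""
    results = []
    for case in test_set:
        answer = model(case["question"])
        missing = [s for s in case["must_include"] if s.lower() not in answer.lower()]
        leaked = EMAIL_RE.findall(answer)
        results.append({
            "question": case["question"],
            "passed": not missing and not leaked,
            "missing": missing,
            "pii": leaked,
        })
    return results


def pass_rate(results):
    """Fraction of test cases that passed."""
    return sum(r["passed"] for r in results) / len(results) if results else 0.0


results = evaluate(fake_model, TEST_SET)
rate = pass_rate(results)
```

Rerunning the same test set after each prompt or retrieval change and comparing the pass rate is what turns "it feels better" into a regression test.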

Key Takeaway: GenAI’s “wow factor” becomes real value only when it’s built on ML fundamentals: clean inputs, grounding, and measurable quality.

Why Do Employers Prioritize Deep Machine Learning Expertise Over Surface-Level GenAI?

If GenAI is the future, why don’t companies hire only “prompt engineers”?

Because most business value comes from the hard parts:

- defining what “good” means

- building data pipelines that don’t break

- grounding answers in reality (and proving it)

- reducing risk (privacy, bias, hallucinations)

- deploying reliably (latency, cost, monitoring)

When a model fails in production, you rarely fix it with “a better prompt”.

You fix it with:

- better data

- better evaluation

- better system design

- better monitoring

That’s why employers prefer AI builders over AI tool users.

And this shows up quickly in interviews: candidates who can explain train/test splits, leakage, overfitting, and evaluation strategy usually stand out—because they can reason about systems, not just outputs.
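One leakage pattern that comes up often is computing preprocessing statistics on the full dataset before splitting. The sketch below shows the leak-free order, using only the standard library; the helper names (`train_test_split`, `fit_scaler`, `transform`) are illustrative and unrelated to any library functions with similar names.

```python
import random
import statistics


def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle a copy of the rows and cut it into train and test parts."""
    rng = random.Random(seed)
    shuffled = list(rows)
    rng.shuffle(shuffled)
    cut = len(shuffled) - round(len(shuffled) * test_fraction)
    return shuffled[:cut], shuffled[cut:]


def fit_scaler(values):
    """Learn mean and standard deviation from the given values only."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values) or 1.0  # avoid dividing by zero
    return mean, std


def transform(values, scaler):
    """Standardize values with previously learned statistics."""
    mean, std = scaler
    return [(v - mean) / std for v in values]


data = [float(i) for i in range(100)]
train, test = train_test_split(data)

# Split first, then fit on the training part only, so no test-set
# statistics influence the preprocessing.
scaler = fit_scaler(train)
train_scaled = transform(train, scaler)
test_scaled = transform(test, scaler)
```

Fitting the scaler on all 100 values would let information about the test rows shape the training features, which can make offline scores look better than production performance.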

💡 Real-World Impact: Recommendation Systems Win Quietly

Some of the biggest business wins in tech come from classical ML work like recommendations, ranking, and A/B testing. These systems aren’t flashy like GenAI demos—but they drive measurable impact. The same “measurement-first” mindset is what makes GenAI reliable too.

How Can Students Build a Durable Career in AI?

Don’t just open a playground and start typing prompts. Build the engine before you drive the car.

Here is a practical, engineering-first plan.

Master the Basics (Math + Intuition)

You don’t need a PhD. But you should be comfortable with:

- vectors/embeddings (high-level understanding)

- probability intuition (what “likely” means)

- loss functions and optimization basics

Even one focused week helps.
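Two of these ideas fit in a few lines of code. The sketch below shows cosine similarity (a common way to compare embedding vectors) and log loss (a loss function that penalizes confident wrong probabilities). The vectors and probabilities are toy values chosen for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def log_loss(y_true, p_pred, eps=1e-12):
    """Mean binary cross-entropy; lower is better."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1 - eps)  # keep log() away from 0
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])

confident_right = log_loss([1, 0], [0.9, 0.1])
hesitant_right = log_loss([1, 0], [0.6, 0.4])
```

The hesitant predictions are still correct at a 0.5 threshold, yet their loss is higher. That gap is the intuition behind training on a loss rather than on accuracy.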

Start with “Boring” Algorithms (They’re not boring in interviews)

Build one classical ML project end-to-end:

- spam classifier (logistic regression)

- house price prediction (linear regression)

- customer segmentation (K-means)

- simple recommender (collaborative filtering)

Bonus challenge: implement a basic model from scratch (no scikit-learn) to understand what’s happening.
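For the from-scratch challenge, a minimal sketch of logistic regression trained with batch gradient descent might look like this. The two features (count of the word "free", count of links) and the six training rows are invented toy data, and the learning rate and epoch count are arbitrary choices that happen to work for it.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train_logistic_regression(X, y, lr=0.3, epochs=2000):
    """Fit weights and bias by batch gradient descent on log loss."""
    n, n_features = len(X), len(X[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            err = p - yi  # gradient of log loss w.r.t. the raw score
            for j, xj in enumerate(xi):
                grad_w[j] += err * xj
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(X, w, b, threshold=0.5):
    """Return 1 (spam) when the predicted probability meets the threshold."""
    return [
        1 if sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) >= threshold else 0
        for xi in X
    ]


# Toy features: [count of "free", count of links]; label 1 = spam.
X = [[0, 0], [1, 0], [0, 1], [3, 2], [4, 1], [2, 3]]
y = [0, 0, 0, 1, 1, 1]
w, b = train_logistic_regression(X, y)
predictions = predict(X, w, b)
```

Writing the update rule by hand makes it clear that "training" is just repeatedly nudging the weights against the gradient of the loss. A real project would also hold out a test set instead of scoring on the training rows.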

Add GenAI the Right Way (As a layer, not as a replacement)

Once the ML core works, enhance it with GenAI:

- generate explanations

- summarize results

- create user-facing text

- build a Q&A layer with retrieval (RAG)
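The retrieval half of a RAG layer can be prototyped before any model is involved. The sketch below ranks chunks by word overlap (Jaccard similarity) and assembles a grounded prompt. This is an assumption-heavy toy: production systems typically use embedding search, and the prompt wording, function names, and sample chunks are all made up for illustration.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Overlap between two token sets, from 0.0 (none) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks whose words overlap most with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(
        chunks,
        key=lambda chunk: jaccard(query_tokens, tokenize(chunk)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, context_chunks):
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say so.\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


chunks = [
    "The refund window is 30 days from purchase.",
    "Shipping usually takes 5 business days.",
    "Support is available on weekdays.",
]
query = "What is the refund window in days?"
top_chunks = retrieve(query, chunks, k=1)
prompt = build_prompt(query, top_chunks)
```

Keeping retrieval separate from generation means you can test it on its own: for each test question, check whether the expected chunk appears in the top k before looking at any generated answer.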

Evaluate Like an Engineer

Don’t ship a “vibe-based” system.

Create a small test set, define a rubric, run regression tests, track failures.


Here’s a simple portfolio path that looks great to employers:

- Build with Classical ML First: Create a real project from scratch (e.g., a movie recommender).

- Enhance with GenAI: Use an LLM to generate summaries or explanations for users.

- Evaluate with ML Principles: Show how you measured improvement, found failures, and iterated.

Your next step is simple: pick one foundational ML project and finish it end-to-end. That’s how you build a career that lasts.

FAQ


Is "Prompt Engineering" a viable long-term career?

By itself, probably not. As models get smarter, they need less manual prompting. However, "AI Engineering"—which combines prompting with system design, evaluation, and data pipelines—is a massive career growth area. Prompting is just one tool in the toolbox, not the whole job.

Can I skip classical Machine Learning and go straight to GenAI?

You can, but you will hit a ceiling. Without understanding concepts like overfitting, data leakage, and evaluation metrics, you will struggle to debug GenAI systems when they fail. The best AI engineers use GenAI for the "last mile" of value, but rely on ML principles for the "first mile" of reliability.

Do I really need to learn the math (Calculus, Linear Algebra)?

For applied roles, you don't need to derive gradients by hand. But you do need intuition about vector spaces (embeddings) and probability (sampling and evaluation metrics). If you treat models purely as "magic boxes," you won't know how to fix them when the magic breaks.
